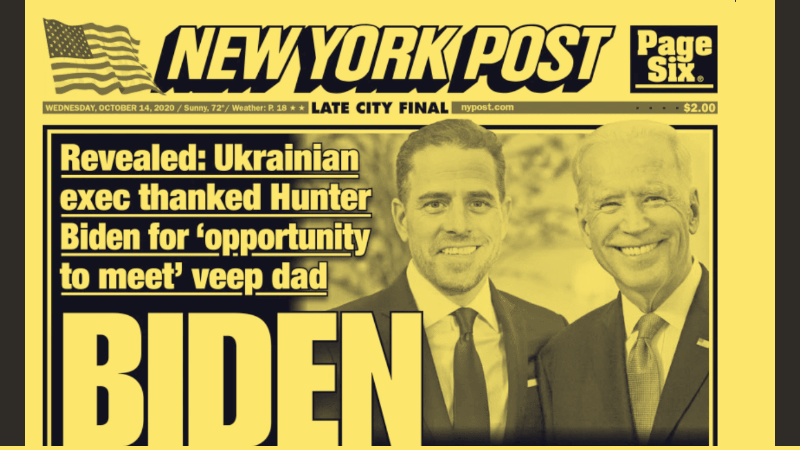

Facebook has decided to limit the distribution of that article, and Twitter is directly preventing it from being shared. Here we explain in detail the information published by the tabloid and why the two networks have doubts about it, but this is also a good moment to tell you why at Maldita.es we believe platforms need to be much more transparent in decisions like these, why they should involve independent fact-checkers, and how they could do better.

When Facebook penalizes content without involving fact-checkers

A few hours after the publication of the New York Post article, a Facebook spokesperson explained that the article was “eligible to be reviewed by the fact-checkers” in its third-party fact-checking program, of which Maldita has been a part since 2019. However, it was the next sentence from that spokesperson that drew the most attention: “In the meantime, we are reducing its distribution on our platform.”

At Maldita.es we were surprised that Facebook limited the distribution of content that had not yet been checked, and that it did so without the participation of external fact-checkers. We wondered how and by whom that decision was made, and based on what criteria. It did not take long for us to see that the director of the International Fact-Checking Network (IFCN), the international alliance we belong to along with more than 70 fact-checkers around the world, was asking the same questions. Many IFCN members also participate in Facebook’s third-party fact-checking program.

How long has Facebook been reducing the distribution of still-unverified content without involving fact-checkers? What happens if a fact-checker later says that the content is accurate? These are some of the questions raised by Baybars Örsek, the director of the IFCN, and we share them.

We spoke with Facebook, and the company explained that the measure is part of its effort to “protect elections.” It said that in some countries, including the United States, it reserves the ability to “temporarily reduce the distribution of content” if it shows signs that it could be false. According to Facebook, this gives an independent fact-checker time to work on the content, which can sometimes be a long process.

The explanation is reasonable, but for us the key issue is how that decision is made. Who decides to “temporarily reduce distribution”? According to what criteria? Are those criteria clear, public and transparent? Can content be penalized before an independent verification process has even begun? Is it a unilateral decision by the platform, or does anyone else have a say? How long can that penalty last if no fact-checker intervenes to confirm or debunk the content?

Twitter directly prevents the article from being shared

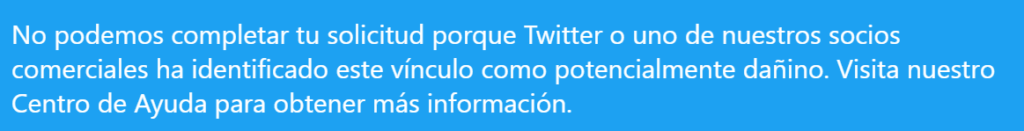

In Twitter’s case, the platform decided to directly prevent users from sharing the article. Twitter does not have a third-party fact-checking program and carries out all of its content moderation on its own, without the involvement of independent fact-checkers.

In this case, the message users receive when they try to share the New York Post article about Joe Biden’s son is: “We can’t complete this request because Twitter or one of our partners has identified this link as potentially harmful.” The company explained its reasons through a corporate account.

Twitter pointed out that its terms of service prohibit “distributing content obtained without authorization” and that it does not want to “incentivize hacking by allowing Twitter to be used to distribute material that may have been obtained illegally.” In the case of the article about Biden’s son, the New York Post itself explains that the email in question was recovered from a laptop that someone left at a repair shop and never picked up. The shop owner handed it over to the FBI, but not before making a copy of the hard drive and sharing it with someone close to Rudy Giuliani, an adviser to President Trump. The copy was then passed on to the newspaper.

Twitter gives this explanation, but users do not see it at the moment they are prevented from sharing the article. It is also a decision taken unilaterally by the company, without any kind of external verification. The company itself has acknowledged that “we have work to do to provide clarity when we enforce our rules in this way. We should add more clarity and context when we block Tweets or Direct Messages with links that violate our policies.” Twitter co-founder and CEO Jack Dorsey said that the company had communicated its decision poorly and that blocking the content without giving “context as to why” was “unacceptable.”

The value of clear and transparent rules

At Maldita.es we believe the problem goes further. Our mission is to fight disinformation, but when decisions are made about what can and cannot be shared, they affect freedom of expression and must therefore be adopted according to absolutely clear and transparent rules for the whole community.

For example, when we rate content for Facebook, it is not removed; it is flagged with a notice showing our rating and linking to our fact-check. In addition, the rated party can appeal and can correct the content so that the rating changes. No platform should allow even the appearance that it is censoring content arbitrarily, judging each case differently depending on the moment.

This is true at any time, but even more so during an election period. Social networks have become a public square where citizens share opinions and debate as a society, and that means the rules governing online life must be analogous to the rules offline. We need consistent rules and transparent decisions that are not made through an opaque process, that can be publicly explained and defended, and that allow any citizen to retrace how it was concluded that something is true or false. For that, we believe independent fact-checkers must always be involved.

At Maldita.es we take very seriously our role in determining what is and is not disinformation, and we can do that work because we have a very clear methodology that we apply in every case. The decisions taken by Facebook and Twitter in this matter show that platforms still have some way to go. At least these two show that they want to do something, unlike others such as YouTube, where disinformation in Spanish, for example about COVID-19, continues to spread freely without any action being taken.